Mathematics of Abstract World Models

Expert Level ⭐⭐

While LLMs have made massive strides, they remain hindered by high training costs and a lack of grounded, experiential reasoning. This has sparked a surge in World Model research—specifically Abstract World Models—which leverage advanced geometric and mathematical frameworks to bridge the gap between statistical prediction and true autonomous reasoning.


🎯 Why this Matters

Purpose: Although Large Language Models (LLMs) have advanced significantly in their reasoning and multimodal capabilities, they still face several fundamental limitations that prevent them from being fully autonomous or universally reliable.

Interest in World Models has been growing in recent years as a way to address the shortcomings of LLMs: noisy, uneven and in some cases scarce data, lack of interpretability, lack of experiential knowledge of the observed world, and costly training. This article delves into Abstract World Models and their reliance on advanced geometric and mathematical concepts to deliver true reasoning capabilities.

Audience: Scientists and engineers investigating World Models as a way to build models on a representation of the natural, observed world.

Value: Get an overview of World Model principles, highlighting the shift toward Abstract architectures. We examine established models to illustrate how Differential Geometry and Topology provide the necessary geometric structure for sophisticated latent-space reasoning.

🌐 World Models

World model learning can capture meaningful representations by embedding complex, high-dimensional data into lower-dimensional abstract spaces that reside in the latent space [ref 1, 2, 3].

📌 Contrary to the popular narrative that World Models are a brand-new breakthrough, the field has actually been evolving since 2016—and arguably even earlier.

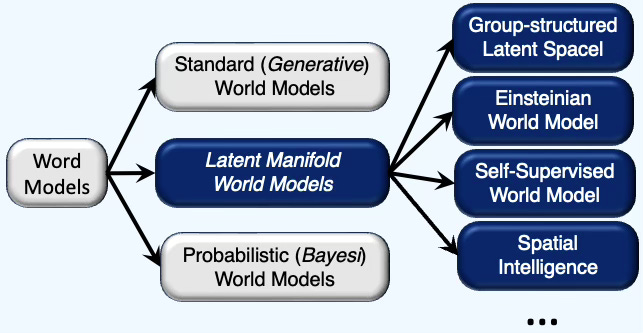

There are many ways to categorize world models as illustrated in the diagram below.

This review focuses on three primary architectural frameworks for world models:

Standard (or Generative) World Models: Systems designed to reconstruct or predict high-dimensional sensory input.

Latent Manifold World Models: Architectures like JEPA or Spatial Intelligence that prioritize representation learning within a latent space.

Probabilistic World Models: Frameworks that utilize uncertainty estimation and probabilistic inference to navigate the environment.

⚠️ This classification of world models, though arbitrary, is intended to highlight the underlying relationship between these architectures and the principles of Differential Geometry, Graph Theory and Topology. The following list of world models is far from being exhaustive!

Standard World Models

Introduction

The standard (generative) World Model is a neural network designed to understand and simulate the dynamics of the observed world, including its physical and spatial properties:

Reconstruction Goal: These models often use a Generative approach, where they attempt to reconstruct original data, such as future video frames or images, from a compressed “latent” representation.

Multimodal Input: They typically process video, sensor streams (like LiDAR), and sometimes language to create a unified internal simulation.

Simulated Training: By predicting future outcomes, an agent can “dream” or plan in a virtual sandbox, which reduces the need for dangerous or expensive observed-world trials.

Common Architectures: Standard world models frequently utilize Variational Autoencoders (VAE) for state compression and Recurrent Neural Networks (RNN), LSTM or Transformers to predict how those states change over time.

We describe two examples of generative world model architectures:

Traditional (or Original) World Models

OpenAI’s Sora

Traditional (or Original) World Models

Introduced by Ha & Schmidhuber [ref 4] and often cited as the catalyst for the modern interest in this field, this model decomposed the problem into three distinct components managed/orchestrated by an agent:

The Vision Model (V): A Variational Autoencoder (VAE) that compresses raw pixel input into a small latent vector z.

The Memory Model (M): A Recurrent Neural Network (RNN) that predicts the next z based on previous states and actions.

The Controller (C): A simple linear model that decides which action to take based solely on the internal representations provided by V and M.
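The interplay of the three components can be sketched in a few lines. This is a minimal illustration, not the implementation from [ref 4]: the VAE is replaced by a fixed random linear projection, the RNN by a single tanh recurrent cell, and all dimensions and weight names (`W_v`, `W_h`, `W_c`) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
OBS_DIM, Z_DIM, H_DIM, A_DIM = 64, 8, 16, 2

# V: stand-in for the VAE encoder (a fixed random linear projection here).
W_v = rng.normal(scale=0.1, size=(Z_DIM, OBS_DIM))

# M: stand-in for the RNN memory, a single tanh recurrent cell.
W_h = rng.normal(scale=0.1, size=(H_DIM, H_DIM + Z_DIM + A_DIM))

# C: linear controller acting on the concatenation [z, h].
W_c = rng.normal(scale=0.1, size=(A_DIM, Z_DIM + H_DIM))
b_c = np.zeros(A_DIM)


def vision(obs):
    """V: compress a raw observation into a latent vector z."""
    return W_v @ obs


def memory(h, z, a):
    """M: update the hidden state from the previous state, latent and action."""
    return np.tanh(W_h @ np.concatenate([h, z, a]))


def controller(z, h):
    """C: choose an action from the internal representations only."""
    return W_c @ np.concatenate([z, h]) + b_c


def rollout(observations):
    """Run the V -> C -> M loop over a sequence of observations."""
    h = np.zeros(H_DIM)
    actions = []
    for obs in observations:
        z = vision(obs)
        a = controller(z, h)
        h = memory(h, z, a)
        actions.append(a)
    return np.stack(actions)


actions = rollout(rng.normal(size=(5, OBS_DIM)))
```

The controller never sees the raw observation: it acts only on `z` and `h`, which is what keeps it small enough to be a linear model.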

OpenAI’s Sora

Although primarily discussed as a video generation tool, OpenAI describes Sora as a “world simulator” [ref 5].

Spatiotemporal Patches: It treats video as a collection of 3D patches, allowing it to model the persistence of objects and physical interactions over time.

Physics Heuristics: While it doesn’t have an explicit physics engine, its scale allows it to “learn” certain physical properties, like gravity and fluid dynamics, through observation.

Probabilistic World Models

This model was proposed by Yoshua Bengio [ref 6]. Unlike the JEPA approach, which focuses on latent consistency, or Fei-Fei Li’s Spatial Intelligence, which focuses on 3D geometric realism, Bengio’s model focuses on epistemic uncertainty, that is, the model’s ability to know what it does not know.

This is a Bayesian probabilistic model that forms hypotheses about the world and estimates the posterior probabilities of those hypotheses from prior probabilities and evidence, using Bayesian inference. The world model could assign a strong prior probability to the known laws of physics, geometry and so on.
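For reference, the posterior over hypotheses follows Bayes' rule. The symbols below are assumptions introduced for illustration: h is a candidate hypothesis about the world, D the observed evidence, and the sum runs over all candidate hypotheses h'.

```latex
P(h \mid D) \;=\; \frac{P(D \mid h)\, P(h)}{\sum_{h'} P(D \mid h')\, P(h')}
```

A strong prior P(h) on hypotheses consistent with known physics means that a large amount of contradicting evidence is needed before the posterior moves away from them.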

📌 It is reasonable to categorize Bengio’s Bayesian World Model as an abstract world model. I treat it as a separate category because of its unique focus on safety and causality.

Latent Manifold World Models

Introduction

Latent Manifold World Models, sometimes referred to as Abstract World Models, are structured representations that purposely omit unnecessary details to focus on key patterns, symmetries, and causal structures.

No Reconstruction: Unlike generative models, abstract models like the Joint-Embedding Predictive Architecture (JEPA) do not reconstruct the complete image or video frame. Instead, they predict missing parts or future states directly within an abstract representation space.

Efficiency: By ignoring irrelevant details (like the exact shape of a cloud or the texture of a wall that doesn’t affect a robot’s path), these models operate at a higher level of abstraction and are much more computationally efficient.

Latent Reasoning: The “center of gravity” shifts from generating data to reasoning in latent space, allowing the model to focus on the semantic meaning of a scene rather than its pixel-perfect appearance.

Group Symmetries: These models often incorporate formal mathematical foundations, such as Equivariant Transition Structures, to ensure that learned representations respect physical symmetries like rotation or translation.

Let’s introduce three well-known Abstract World Models:

Joint Embedding Predictive Architecture

Markov Decision Process Abstraction

Spatial Intelligence

Joint Embedding Predictive Architecture (JEPA)

Unlike generative models that rely on observation-level reconstruction, Joint Embedding Predictive Architectures (JEPAs), introduced by Yann LeCun, optimize for representation consistency across multiple data views in the latent manifold [ref 7]. This approach avoids the computational burden of density estimation, enabling the encoder to capture abstract, task-invariant features. By operating independently of raw input constraints, the architecture offers superior flexibility in feature encoding. JEPA embeds features that are useful for predicting the next state while discarding unpredictable features.

JEPAs are particularly relevant in processing geometric priors and manifolds because they treat the latent space as the primary arena for learning.

📌 The earliest and most common JEPA models are purely self-supervised and do not use Reinforcement Learning (RL) during pre-training. However, some of the latest variants explicitly integrate RL.

Group-structured Latent Spaces

Geometric priors ensure that an agent’s internal representation of states and actions respects the underlying symmetry of its environment. Because a Markov Decision Process typically consists of a mix of symmetric and non-symmetric elements, embedding these as structural priors within the latent space allows the agent to generalize more efficiently from limited experience.

The two main characteristics are:

Geometric Priors: These models encode SE(3) (3D Euclidean motions) or SO(3) (rotations) directly into the latent space.

Equivariance: If an object moves in the real world, its representation in the latent space moves along the manifold according to the laws of symmetry. This allows the model to generalize “object permanence” and “physics” across different viewpoints without needing billions of examples.
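The difference between equivariance and invariance can be checked numerically. The sketch below uses planar rotations (SO(2)) instead of SO(3) or SE(3) to stay short, and the two toy encoders are hypothetical stand-ins for a learned network.

```python
import numpy as np


def rotation(theta):
    """2D rotation matrix, an element of SO(2)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s], [s, c]])


def equivariant_encoder(x, scale=0.5):
    """Toy encoder f(x) = scale * x, which commutes with every rotation."""
    return scale * x


def invariant_encoder(x):
    """Toy encoder f(x) = ||x||, unchanged by any rotation."""
    return np.linalg.norm(x)


x = np.array([1.0, 2.0])
g = rotation(np.pi / 3)

# Equivariance: encoding the rotated input equals rotating the encoding.
equivariance_gap = np.linalg.norm(
    equivariant_encoder(g @ x) - g @ equivariant_encoder(x)
)

# Invariance: the encoding does not change under rotation.
invariance_gap = abs(invariant_encoder(g @ x) - invariant_encoder(x))
```

Both gaps are zero up to floating-point error. For a learned encoder, the same quantities can be monitored during training to see how well the symmetry is respected.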

Einsteinian World Model

This model explicitly learns globally consistent solutions using the Space-Time manifold.

Metric Learning: Instead of learning abstract features, the network predicts the local metric tensor—the mathematical object that defines distances and curvature—across overlapping coordinate patches.

Curvature as Loss: The model adds the Einstein field equations to the training loss to ensure the world model is physically valid. The loss (energy) increases if it predicts a move that violates the curvature rules of its space-time manifold.

Global Consistency: To prevent the model from becoming a “bag of heuristics,” it enforces consistency between patches, ensuring the latent space is a smooth, continuous manifold.

Spatial Intelligence

Spatial Intelligence models, developed by Dr. Fei-Fei Li, focus on the understanding of 3D space and time [ref 8]. Unlike LLMs that process tokens, Spatial Intelligence models build persistent 3D worlds to interact with the physical world. These simulated worlds capture the geometric structure of the observable world as represented in the latent space. Therefore, these spatial models are inherently multimodal.

These models incorporate geometry and physical laws to predict an action and the resulting next state. The latent space is often structured as a 3D/4D occupancy grid or a neural radiance field (NeRF). It isn't just a vector of numbers.

Conceptually, Spatial Intelligence is an abstract world model because it aims to provide the "scaffolding" for cognition. Like the JEPA family, it seeks to move beyond pixel-level correlations toward a deep understanding of physical laws, causality, and geometry.

👉 For the remainder of this article, we focus on Abstract World Models, and more specifically on JEPA and the Group-structured Latent Space Model, to illustrate the role of advanced mathematics.

🧮 Latent Manifold Models

Overview

There is currently no universal, strictly standardized definition of an “abstract world model” in the AI research community. While the term is widely used, its boundaries shift depending on whether the researcher focuses on generative reconstruction or latent prediction.

However, the community generally agrees on several core functional pillars that characterize these architectures:

Core Functional Pillars

Latent State Representation: They must compress high-dimensional sensory data into a lower-dimensional “latent” manifold.

Temporal Dynamics (Memory): They must include a component that predicts how the latent state evolves over time, often based on internal physics or learned transitions.

Predictive Ability: A true world model must be able to “hallucinate” or simulate future outcomes without receiving new external data.

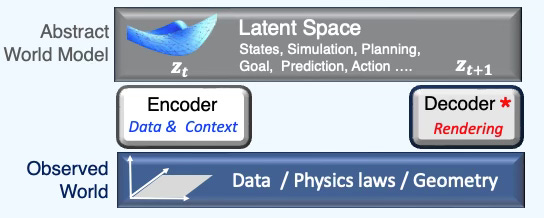

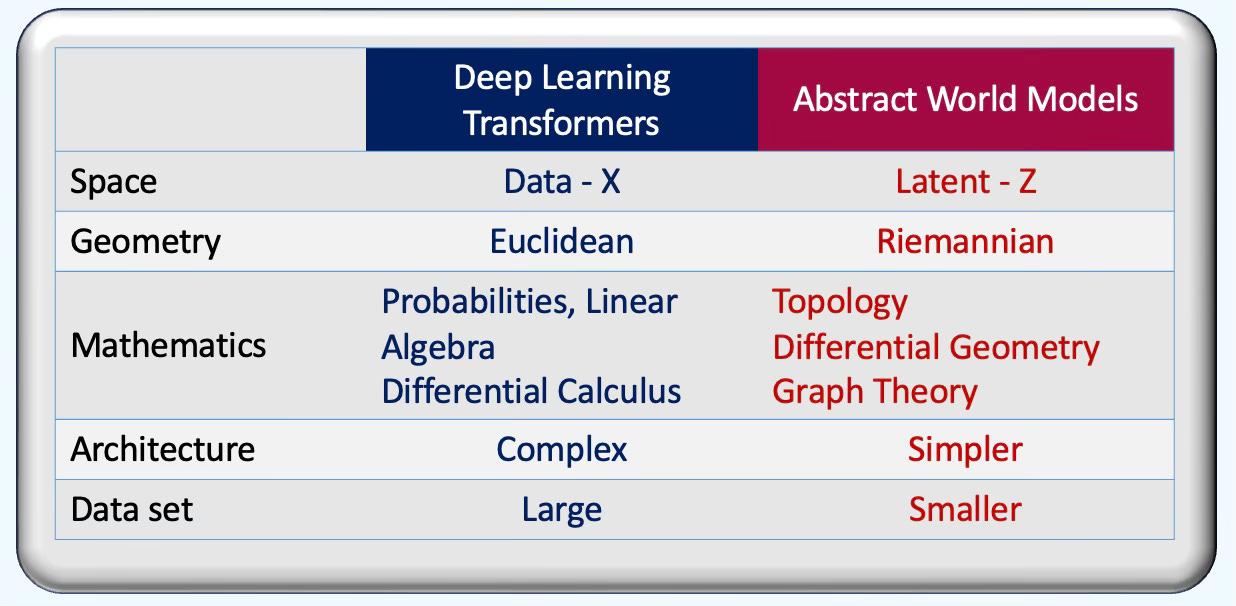

To understand the shift toward Abstract World Models, we must contrast them with traditional deep learning and standard Transformer architectures. As the following table illustrates, the fundamental divergence lies in their geometric assumptions—moving from flat Euclidean spaces to smooth or discrete manifolds—and the underlying mathematical frameworks that govern their representations.

Latent Representation

Let’s consider a bouncing ball. Each video frame consists of millions of pixel values changing every millisecond. However, the underlying attributes are few: the ball’s position (geometry), and its velocity and gravity (physical laws). The latent space is the mathematical space into which the pixels are mapped to recover these underlying attributes.

One established fact is that the entities in the latent space, and the transformations and operations applied to them, are critical to abstract world models. The abstract world model replicates and compresses the observed world (data, physical laws, geometric constraints, prior knowledge, ...) into the latent space along with the target.

Therefore, the state of the ‘world’ is predicted as a latent variable, and the loss or objective is computed on a low-dimensional manifold.

📌 A significant number of world models enforce geometric priors in the latent space through a regularization term in the training loss function:

Invariance constraint

Equivariance constraint
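One common way to write these two constraints as regularization terms is given below. The notation is an assumption made for illustration: f is the encoder, g a transformation drawn from a symmetry group acting on the input x, ρ(g) the corresponding action on the latent space, and α, β hypothetical weights. A model may use either term or both.

```latex
\mathcal{L}_{\text{inv}} = \mathbb{E}_{x,\,g}\,\big\| f(g \cdot x) - f(x) \big\|_2^2
\qquad
\mathcal{L}_{\text{equiv}} = \mathbb{E}_{x,\,g}\,\big\| f(g \cdot x) - \rho(g)\, f(x) \big\|_2^2

\mathcal{L}_{\text{total}} = \mathcal{L}_{\text{task}} + \alpha\,\mathcal{L}_{\text{inv}} + \beta\,\mathcal{L}_{\text{equiv}}
```

Invariance is the special case of equivariance in which ρ(g) is the identity for every g.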

The decoder reconstructs or renders the updated latent state back to the observed world.

How do we define the latent space in this context? It is best understood through three distinct lenses:

Geometry: Representations are constructed as smooth or discrete manifolds, meshes, and graphs, or within topological domains such as hypergraphs and simplicial complexes.

Variables: The space serves as a container for latent variables, including states, rewards, objectives, and operational constraints.

Activities: This low-dimensional manifold provides the playground for computation, where prediction, planning, simulation, and optimization are executed with high efficiency.

⚠️ When learning state representations, the most significant obstacle is the collapse of the latent space. This occurs when the encoder maps distinct data points x to an identical or nearly identical embedding z, causing the model to lose the ability to differentiate between unique inputs.

Furthermore, even if the embeddings are distinct, the model may fail to properly preserve the geometric distances between states during the prediction process, leading to inaccurate simulations of reality.
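Collapse is easy to detect on a batch of embeddings. The sketch below is one possible diagnostic, not a method taken from the cited papers: it reports the smallest per-dimension standard deviation and an effective rank computed from the singular values. The function name `collapse_report` and the synthetic batches are hypothetical.

```python
import numpy as np


def collapse_report(Z, eps=1e-8):
    """Return (smallest per-dimension std, effective rank) for embeddings Z of shape (n, d)."""
    std = Z.std(axis=0)
    # Singular values of the centered batch show how many directions carry variance.
    s = np.linalg.svd(Z - Z.mean(axis=0), compute_uv=False)
    p = s / (s.sum() + eps)
    # Effective rank = exp(entropy of the normalized singular values).
    eff_rank = float(np.exp(-(p * np.log(p + eps)).sum()))
    return float(std.min()), eff_rank


rng = np.random.default_rng(0)
healthy = rng.normal(size=(256, 8))                      # spread over all 8 dimensions
collapsed = np.tile(rng.normal(size=(1, 8)), (256, 1))   # every input mapped to one point

healthy_std, healthy_rank = collapse_report(healthy)
collapsed_std, collapsed_rank = collapse_report(collapsed)
```

A healthy batch has an effective rank close to the latent dimension, while a collapsed batch has near-zero standard deviation and an effective rank close to 1.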

Group-structured Latent Space Models

World Models are advanced AI systems learning the rules of reality (physics, cause-and-effect) from data like videos to simulate and predict dynamic 3D environments, enabling more robust reasoning, planning, and creation beyond static content [ref 9].

World Models go beyond Large Language Models (LLMs) by understanding spatial relationships and physical interactions, allowing AI to generate immersive, interactive worlds for robotics, design, medicine, and complex problem-solving, representing a significant leap towards human-level intelligence.

🤖 For math-minded readers

Let’s consider a Markov Decision Process (MDP) fully defined by a state space S, an action space A, a reward function R and a state transition function T.

A self-supervised world model is defined in a latent space Z with the following learnable components:

Learnable encoding that projects states into a low-dimensional manifold

Learnable transition function

Learnable reward function
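These components can be written compactly as follows. The symbols φ, T̄ and R̄ and the parameter subscripts are assumptions introduced here for illustration, and the transition is written as deterministic for simplicity.

```latex
\mathcal{M} = (S, A, R, T), \qquad T : S \times A \to S, \qquad R : S \times A \to \mathbb{R}

\phi_\theta : S \to Z
\qquad
\bar{T}_\theta : Z \times A \to Z
\qquad
\bar{R}_\theta : Z \times A \to \mathbb{R}

\phi_\theta\big(T(s, a)\big) \;\approx\; \bar{T}_\theta\big(\phi_\theta(s),\, a\big),
\qquad
R(s, a) \;\approx\; \bar{R}_\theta\big(\phi_\theta(s),\, a\big)
```

The last line states the consistency the model is trained toward: encoding then predicting in latent space should agree with stepping the real environment then encoding.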

JEPA

JEPA’s approach avoids the computational burden of density estimation, enabling the encoder to capture abstract, task-invariant features [ref 10, 11].

📌 The original JEPA framework has evolved into a diverse ecosystem of specialized architectures. Notable variants include V-JEPA for video, LeJEPA, and Hierarchical JEPA, alongside targeted iterations such as Multi-Resolution, Cell-JEPA, and Lp-JEPA.

The following mathematical formalism reflects the original definition of JEPA

🤖 For math-minded readers

In JEPA and its video-based extension (V-JEPA), the core mathematical framework is energy-based: the energy is minimized in the latent space. It measures the L2 distance between the predicted latent representation and the target latent representation.

x: Input and contextual data tensor

y: Target (labeled) data

z: Latent vector

sx: Latent representation of the context/data from the input encoder

sy: Latent representation of the target from the target encoder

Pred: Prediction neural model for the latent state, using the latent features sx and the latent variable z

Let’s look at the predictor model. It simply generates an estimate of the target latent state from the contextual features sx and the latent variable z
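Using the symbols defined above, the energy and the predictor can be written as follows. The encoder names Enc_x and Enc_y are assumptions introduced for illustration.

```latex
s_x = \mathrm{Enc}_x(x), \qquad s_y = \mathrm{Enc}_y(y), \qquad \hat{s}_y = \mathrm{Pred}(s_x, z)

E(x, y, z) = \big\| s_y - \mathrm{Pred}(s_x, z) \big\|_2^2
```

The energy is low when the predicted latent state matches the encoded target, and no reconstruction of y in input space is involved.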

JEPA uses variance-based regularization as a constraint to prevent the representations from collapsing [ref 11, 12].

📌 Traditionally, self-supervised deep learning models would use contrastive learning.

The loss known as the Variance-Invariance-Covariance Regularization component is expressed as:

lambda, mu, nu: Hyperparameters of the model

Inv: Invariance as the mean-squared error between two batches of embeddings

v: Variance as the hinge loss pushing the standard deviation of each embedding dimension above a given threshold

C: Covariance term that penalizes the off-diagonal elements of the covariance matrix of the embeddings

Z, Z’: Batch of embeddings

n: Size of batch

d: Dimension of the latent variable
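With these definitions, the regularization can be written as below, following the formulation of the VICReg paper [ref 11]. The threshold γ, the small constant ε, and the notation z^{(j)} for the j-th embedding dimension across the batch are symbols from that formulation, not from the list above.

```latex
\mathcal{L}_{\text{VICReg}}(Z, Z') = \lambda\,\mathrm{Inv}(Z, Z') + \mu\,\big[v(Z) + v(Z')\big] + \nu\,\big[C(Z) + C(Z')\big]

\mathrm{Inv}(Z, Z') = \frac{1}{n} \sum_{i=1}^{n} \big\| z_i - z'_i \big\|_2^2

v(Z) = \frac{1}{d} \sum_{j=1}^{d} \max\!\Big(0,\; \gamma - \sqrt{\mathrm{Var}\big(z^{(j)}\big) + \epsilon}\Big)

C(Z) = \frac{1}{d} \sum_{j \neq k} \big[\mathrm{Cov}(Z)\big]_{jk}^{2},
\qquad
\mathrm{Cov}(Z) = \frac{1}{n-1} \sum_{i=1}^{n} (z_i - \bar{z})(z_i - \bar{z})^{\top}
```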

The total loss is then computed as

The weights of the target encoder are computed using a simple exponential moving average on the context weights theta
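A NumPy sketch of the regularization and of the moving-average update is given below. It is an illustration under stated assumptions rather than a reference implementation: the function names, the default weights, the threshold `gamma`, the constant `eps` and the momentum `tau` are all chosen here for the example.

```python
import numpy as np


def vicreg_loss(Z, Zp, lam=25.0, mu=25.0, nu=1.0, gamma=1.0, eps=1e-4):
    """VICReg-style loss for two batches of embeddings of shape (n, d)."""
    n, d = Z.shape

    # Invariance: mean squared distance between paired embeddings.
    inv = np.mean(np.sum((Z - Zp) ** 2, axis=1))

    def variance(E):
        # Hinge on the per-dimension standard deviation.
        std = np.sqrt(E.var(axis=0, ddof=1) + eps)
        return np.mean(np.maximum(0.0, gamma - std))

    def covariance(E):
        # Sum of squared off-diagonal covariance entries, scaled by 1/d.
        Ec = E - E.mean(axis=0)
        cov = Ec.T @ Ec / (n - 1)
        off_diag = cov - np.diag(np.diag(cov))
        return np.sum(off_diag ** 2) / d

    return (lam * inv
            + mu * (variance(Z) + variance(Zp))
            + nu * (covariance(Z) + covariance(Zp)))


def ema_update(target, context, tau=0.99):
    """Exponential moving average of the context weights into the target weights."""
    return tau * target + (1.0 - tau) * context


rng = np.random.default_rng(0)
Z = rng.normal(size=(128, 16))
Zp = Z + 0.01 * rng.normal(size=(128, 16))   # slightly perturbed second view

loss_healthy = vicreg_loss(Z, Zp)
loss_collapsed = vicreg_loss(np.zeros_like(Z), np.zeros_like(Z))
```

The collapsed batch has a perfect invariance term but is heavily penalized by the variance term, which is the mechanism that keeps the encoder from mapping every input to the same point.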

The following diagram illustrates the 3 top components of an abstract world model: Encoder, Latent space and Decoder with associated energy minimization, and actuation policy.

📌 Beyond JEPA and Markovian frameworks, the landscape of abstract world models includes several specialized paradigms, such as Equivariant World Models [ref 12], Symplectic World Models [ref 13], and Spacetime World Manifolds [ref 14]. A technical overview of these alternatives is provided in the Appendix.

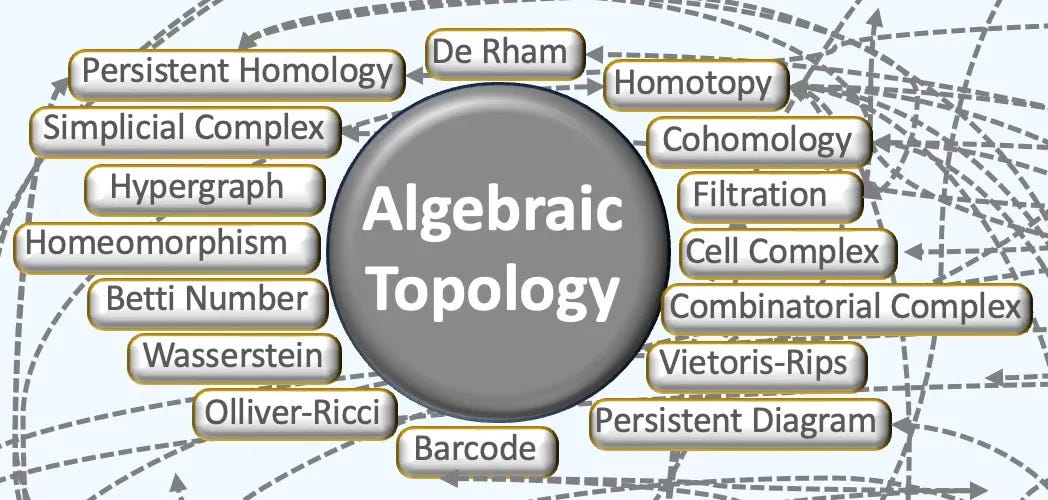

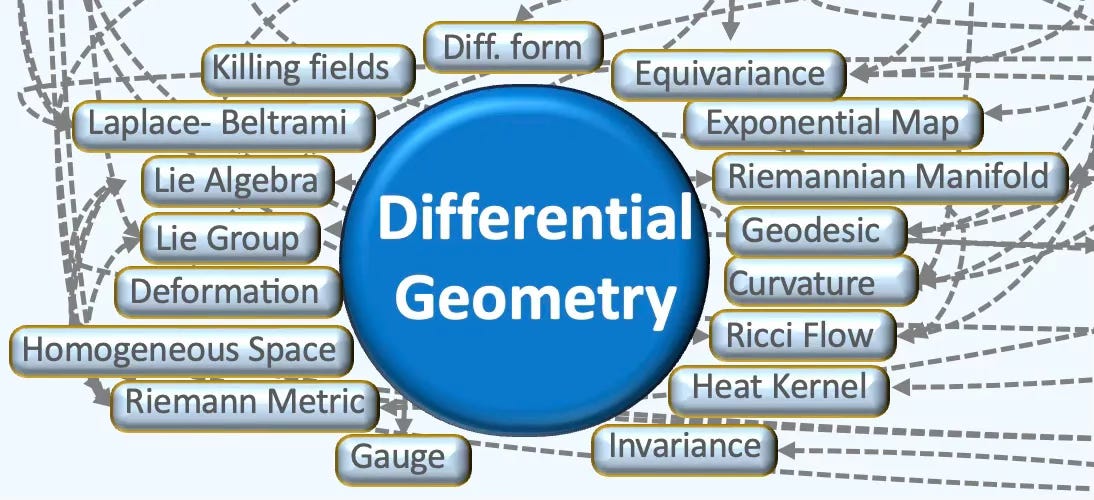

📐 Mathematics of Abstract World Models

The latent space serves as the functional arena for prediction and planning, relying on structures like graphs, manifolds, and simplicial complexes. Understanding the underlying mathematics—specifically Differential Geometry for local curvature, invariance and equivariance, Algebraic Topology for global connectivity, and Group Theory for symmetry—is vital for anyone looking to build or optimize models within these non-Euclidean spaces.

📌 This section examines abstract world models that utilize smooth or discrete manifolds to represent the world within a latent space. While standard world models typically employ reinforcement learning techniques—such as Policy Optimization or Q-Learning—directly on these latent representations, this framework explores the geometric properties of the underlying manifolds.

In world models, the shift from processing pixels to understanding and modeling world states relies heavily on specific structures from differential geometry, topology, and graph theory.

Differential geometry, topology, category theory and graph theory are the underpinnings of geometric deep learning and are described in detail in a previous article, Demystifying the Math of Geometric Deep Learning.

Here is a summary of these fields.

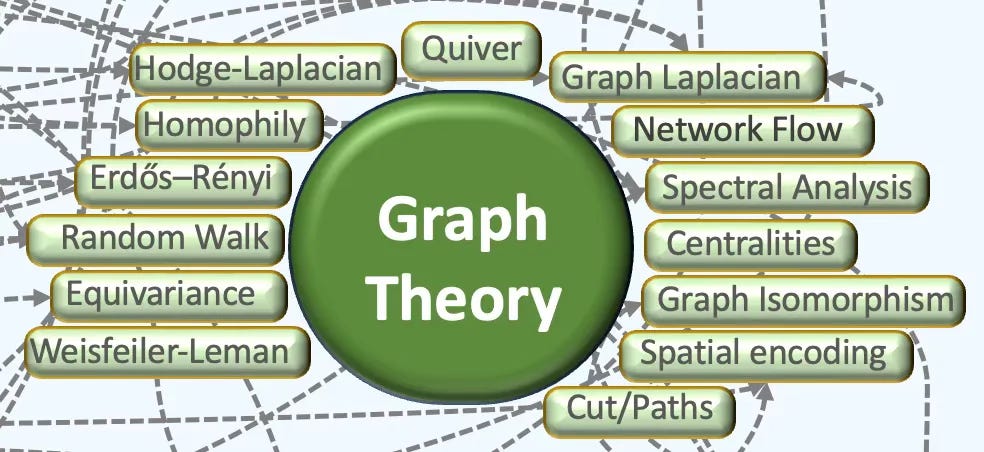

🔗 Graph Theory

Overview

Graph theory concerns mathematical graphs used to encode pairwise relations. A graph comprises vertices and edges; a digraph differs from an undirected graph in that each edge is oriented [ref 15].

Here are basic concepts in Graph Theory that are applicable to Abstract World Models:

Graph descriptors include paths, cycles (including loops), distances, in/out degrees, density, and centrality indices such as betweenness and closeness.

Standard representations include the adjacency matrix, the edge list (source–target pairs), and the adjacency list (each node with its neighbor set).

Homophily factor assesses similarity between a node (or edge) and its neighborhood.

Spectral graph theory studies how a graph’s structure is reflected in the eigenvalues and eigenvectors of its associated matrices—especially the adjacency and (normalized) Laplacian. The spectrum (the multiset of eigenvalues) is invariant under graph isomorphisms.

Spectral decomposition expresses a diagonalizable graph matrix as UDU⁻¹ (UDU⊤ for symmetric cases), separating eigenvalues (the diagonal of D) from eigenvectors (the columns of U).
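The spectral concepts above can be illustrated on a four-node path graph. This is a minimal sketch using the combinatorial Laplacian L = D - A; the graph and variable names are chosen only for the example.

```python
import numpy as np

# Undirected path graph 0 - 1 - 2 - 3, given as an edge list.
edges = [(0, 1), (1, 2), (2, 3)]
n = 4

# Adjacency matrix built from the edge list.
A = np.zeros((n, n))
for u, v in edges:
    A[u, v] = A[v, u] = 1.0

# Combinatorial Laplacian L = D - A, with D the diagonal degree matrix.
D = np.diag(A.sum(axis=1))
L = D - A

# L is symmetric, so eigh returns real eigenvalues (ascending) and orthonormal eigenvectors.
eigvals, U = np.linalg.eigh(L)

# Spectral decomposition: L = U diag(eigvals) U^T.
L_rebuilt = U @ np.diag(eigvals) @ U.T

# The number of (near-)zero eigenvalues equals the number of connected components.
n_components = int(np.sum(eigvals < 1e-9))
```

Relabeling the nodes permutes the rows and columns of L but leaves `eigvals` unchanged, which is the isomorphism invariance of the spectrum.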

Applicability

Discrete Ricci Curvature: The discrete Ricci curvature (Ollivier-Ricci formulation using the Wasserstein distance) is used to detect bottlenecks in information flow within a graph. Regions of strongly negative curvature often correspond to critical decision points or physical boundaries in a spatial environment.

Expander Graphs: Used in the design of the connectivity of world models to ensure that information can propagate quickly without the latent space collapsing.

Graph Isomorphism: Mathematical basis for a model to recognize that two layouts of the world are structurally similar despite different labels or physical dimensions. In practice, isomorphism is commonly tested with the Weisfeiler-Leman algorithm, which is a necessary but not sufficient check.
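The Weisfeiler-Leman idea can be sketched with plain color refinement (1-WL). This is a simplified illustration: colors are kept as nested tuples instead of being hashed to integers, which is only practical for small graphs and few rounds. The function name and the example graphs are hypothetical.

```python
from collections import Counter


def wl_histogram(adj, rounds=3):
    """Run 1-WL color refinement and return the final color histogram.

    adj maps each node to the list of its neighbors (an adjacency list).
    """
    colors = {v: 0 for v in adj}  # start from a uniform coloring
    for _ in range(rounds):
        # New color = old color plus the sorted multiset of neighbor colors.
        colors = {
            v: (colors[v], tuple(sorted(colors[u] for u in adj[v])))
            for v in adj
        }
    return Counter(colors.values())


# Two copies of a 3-node path with different node labels, and a triangle.
path_a = {0: [1], 1: [0, 2], 2: [1]}
path_b = {"x": ["y"], "y": ["x", "z"], "z": ["y"]}
triangle = {0: [1, 2], 1: [0, 2], 2: [0, 1]}

same_structure = wl_histogram(path_a) == wl_histogram(path_b)
different_structure = wl_histogram(path_a) != wl_histogram(triangle)
```

Different histograms prove that two graphs are not isomorphic. Equal histograms only mean the test could not tell them apart, since some non-isomorphic graphs (regular graphs of the same degree, for instance) receive identical colorings.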

🍩 Algebraic Topology

Overview

General Topology and Algebraic Topology in particular are the foundation of Topological Deep Learning [ref 16].

Here are basic concepts in topology that are applicable to Abstract World Models:

Point-set (general) topology treats the foundational definitions and constructions of topology. Key concepts include continuity (pre-images of open sets are open), compactness (every open cover admits a finite sub-cover), and connectedness (no separation into two disjoint nonempty open subsets).

A topological space is a set equipped with a topology—a collection of open sets (or neighborhood system) satisfying axioms that capture “closeness” without distances. It may or may not arise from a metric. Common examples include Euclidean spaces, metric spaces, and manifolds.

Homeomorphism or topological equivalence is defined as a bijective, continuous map with continuous inverse between two spaces that are considered similar. Algebraic topology seeks invariants that do not change under such equivalences.

Homotopy theory is a branch of algebraic topology that studies the properties of spaces that are preserved under continuous deformations, called homotopies.

The Betti numbers are key topological invariants: the k-th Betti number counts the k-dimensional holes in a space (connected components, loops, voids).

A Simplicial Complex is a generalization of graphs that models higher-order relationships among data elements, not just pairwise ones (edges).

Cochains are signals, or functions, that map the cells of rank k of a combinatorial complex to real vectors. The set of cochains defines the Cochain Space.

Homology/Persistent Homology decomposes topological spaces into formal chains of pieces. A barcode represents the appearance and disappearance of these structural features (topological elements) as the radius grows.

Cohomology, derived from a cochain complex, gives algebraic invariants of a space.

Filtrations of a space across scales give rise to persistent homology, summarizing the birth and death of features; distances between summaries are stable under small perturbations.
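Betti numbers of a small simplicial complex can be computed from the ranks of its boundary matrices. The sketch below handles complexes up to dimension 2 with real coefficients; the function name and the triangle examples are chosen for illustration.

```python
import numpy as np


def betti_numbers(n_vertices, edges, triangles):
    """Betti numbers (b0, b1) of a 2-dimensional simplicial complex over the reals."""
    edge_index = {tuple(sorted(e)): i for i, e in enumerate(edges)}

    # Boundary matrix d1: edges -> vertices.
    d1 = np.zeros((n_vertices, len(edges)))
    for j, (u, v) in enumerate(edges):
        u, v = sorted((u, v))
        d1[u, j], d1[v, j] = -1.0, 1.0

    # Boundary matrix d2: triangles -> edges, with alternating signs.
    d2 = np.zeros((len(edges), len(triangles)))
    for j, tri in enumerate(triangles):
        a, b, c = sorted(tri)
        d2[edge_index[(b, c)], j] = 1.0
        d2[edge_index[(a, c)], j] = -1.0
        d2[edge_index[(a, b)], j] = 1.0

    rank1 = np.linalg.matrix_rank(d1) if edges else 0
    rank2 = np.linalg.matrix_rank(d2) if triangles else 0
    b0 = n_vertices - rank1           # connected components
    b1 = len(edges) - rank1 - rank2   # independent loops
    return int(b0), int(b1)


triangle_edges = [(0, 1), (1, 2), (0, 2)]
hollow = betti_numbers(3, triangle_edges, [])            # boundary of a triangle
filled = betti_numbers(3, triangle_edges, [(0, 1, 2)])   # triangle with its face
```

The hollow triangle has one component and one loop, while filling in the face removes the loop. Persistent homology repeats this kind of computation across a filtration and records when each feature appears and disappears.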

Applicability

Persistent Homology: This is used to identify the structures in data across different scales. In spatial intelligence, it helps an AI distinguish between a solid wall and a doorway by analyzing the topological connectivity of the mapped environment.

Quotient Spaces: They are used to collapse irrelevant information. For example, a world model might treat all objects as the same point in a high-level topological map, ignoring the specific attributes or noise.

Homeomorphisms: Two agents may coordinate through a homeomorphism (a continuous mapping with a continuous inverse) between their two different internal world models.

🌀 Differential Geometry

Overview

Differential geometry studies smooth spaces (manifolds) by doing calculus on them, using tools like tangent spaces, vector fields, and differential forms. It formalizes intrinsic notions such as curvature, geodesics, and connections—foundations for modern physics and for algorithms in graphics, robotics, and machine learning on curved data [ref 17].

Here are basic concepts in differential geometry that are applicable to Abstract World Models:

A manifold is a topological space that, around any given point, closely resembles Euclidean space. Specifically, an n-dimensional manifold is a topological space where each point is part of a neighborhood that is homeomorphic to an open subset of n-dimensional Euclidean space. Examples of manifolds include one-dimensional circles, two-dimensional planes and spheres, and the four-dimensional space-time used in general relativity.

Smooth or Differential manifolds are types of manifolds with a local differential structure, allowing for definitions of vector fields or tensors that create a global differential tangent space. A Riemannian manifold is a differential manifold that comes with a metric tensor, providing a way to measure distances and angles.

The tangent space at a point on a manifold is the set of tangent vectors at that point, like a line tangent to a circle or a plane tangent to a surface. Tangent vectors can act as directional derivatives, where you can apply specific formulas to characterize these derivatives.

A geodesic is the shortest path (arc) between two points in a Riemannian manifold. Practically, geodesics are curves on a surface that only turn as necessary to remain on the surface, without deviating sideways. They generalize the concept of a “straight line” from a plane to a surface, representing the shortest path between two points on that surface.

An exponential map turns an infinitesimal move on the tangent space into a finite move on the manifold. In simple terms, given a tangent vector v, the exponential map consists of following the geodesic in the direction of v for a unit of time.

A logarithm map brings a nearby point back to the tangent plane to define the tangent vector. It consists of finding the geodesic between two points on a manifold and extracting its initial tangent vector.

Curvature measures the extent to which a geometric object like a curve strays from being straight, or a surface diverges from being flat. It can also be defined as a vector that incorporates both the direction and the magnitude of the curve’s deviation.
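The exponential and logarithm maps have closed forms on the unit sphere, which makes it a convenient manifold for a worked example. The sketch below assumes points are unit vectors in 3D and tangent vectors are orthogonal to their base point.

```python
import numpy as np


def exp_map(p, v):
    """Exponential map on the unit sphere: follow the geodesic from p along v for unit time."""
    norm = np.linalg.norm(v)
    if norm < 1e-12:
        return p
    return np.cos(norm) * p + np.sin(norm) * v / norm


def log_map(p, q):
    """Logarithm map on the unit sphere: tangent vector at p pointing toward q."""
    cos_theta = np.clip(np.dot(p, q), -1.0, 1.0)
    theta = np.arccos(cos_theta)          # geodesic distance between p and q
    direction = q - cos_theta * p         # component of q orthogonal to p
    norm = np.linalg.norm(direction)
    if norm < 1e-12:
        return np.zeros_like(p)
    return theta * direction / norm


north = np.array([0.0, 0.0, 1.0])
v = np.array([np.pi / 2, 0.0, 0.0])   # tangent at the north pole, length pi/2

q = exp_map(north, v)                 # a quarter turn lands on the equator
v_back = log_map(north, q)            # the log map recovers the tangent vector
geodesic_distance = np.linalg.norm(v_back)
```

The length of the tangent vector returned by the logarithm map is the geodesic distance between the two points, here a quarter of a great circle.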

Applicability

The most direct application in Abstract World Models is the concept of the Latent Manifold.

Riemannian Manifolds: It is assumed that high-dimensional data sits on a lower-dimensional smooth manifold, on which the distance between two states in a world model is the length of a geodesic, the shortest path along the curved surface of the manifold.

Lie Groups and Lie Algebras: Agents understand rotation, translation, reflection or any affine transformation, modeled as Lie groups. Spatial intelligence uses these to maintain equivariance—ensuring that if an object moves in the real world, its representation moves predictably in the latent space.

Ricci Curvature and Riemannian Metric: These characteristics of smooth manifolds are used to identify the low dimensional space of the state of the world and compute embeddings (Encoder).
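For the Lie group of 3D rotations, the link between the Lie algebra so(3) and the group SO(3) is given by Rodrigues' formula. The sketch below is a minimal illustration; the function names `hat` and `so3_exp` are chosen for the example.

```python
import numpy as np


def hat(w):
    """Map a vector in R^3 to the matching skew-symmetric matrix in so(3)."""
    x, y, z = w
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])


def so3_exp(w):
    """Rodrigues' formula: exponential map from the Lie algebra so(3) to the group SO(3)."""
    theta = np.linalg.norm(w)
    if theta < 1e-12:
        return np.eye(3)
    K = hat(w / theta)
    return np.eye(3) + np.sin(theta) * K + (1.0 - np.cos(theta)) * (K @ K)


R = so3_exp(np.array([0.0, 0.0, np.pi / 2]))   # quarter turn about the z-axis
rotated = R @ np.array([1.0, 0.0, 0.0])        # the x-axis is sent to the y-axis
```

Working in the Lie algebra lets a model predict an unconstrained 3-vector and still obtain a valid rotation, since the exponential map always returns an orthogonal matrix with determinant 1.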

🧠 Key Takeaways 💎

✅ Research Trajectory: World models represent a rapidly evolving frontier, with novel methodologies and applications emerging weekly.

✅ Architectural Taxonomy: These frameworks are broadly classified into three paradigms: generative, latent-predictive, and Bayesian.

✅ Compression & Fidelity: By encoding raw data alongside physical laws, geometric constraints, and prior knowledge, these models create a compressed mirror of reality.

✅ Geometric Foundations: Abstract world models are formally structured as low-dimensional manifolds within the latent space.

✅ Mathematical Underpinnings: The execution of predictive and generative tasks on these manifolds necessitates advanced frameworks, including differential geometry, topology, and graph theory.

📘 References

World Models: The Next Frontier in Our Path to AGI is Here - I. de Gregorio - Medium, 2023

Nvidia Glossary: World Models - Nvidia, 2026

AI and World Models - R. Worden - Active Inference Institute, 2026

World Models - D. Ha, J. Schmidhuber, 2018

Sora as a World Model? - F. D. Puspitasari et al., 2026

Can a Bayesian Oracle Prevent Harm from an Agent? - Y. Bengio et al., 2024

LeJEPA: Provable and Scalable Self-Supervised Learning Without the Heuristics - R. Balestriero, Y. LeCun, 2025

From Words to Worlds: Spatial Intelligence is AI’s Next Frontier - Dr. Fei-Fei Li - Substack, 2025

Learning Abstract World Models with a Group-Structured Latent Space - T. Delliaux, N-K Vu, V. Francois-Lavet, E. Van der Pol, 2025

VJEPA: Variational Joint Embedding Predictive Architectures as Probabilistic World Models - Y. Huang, 2026

VICReg: Variance-Invariance-Covariance Regularization for Self-Supervised Learning - A. Bardes, J. Ponce, Y. LeCun, 2021

Flow Equivariant World Models: Memory for Partially Observed Dynamic Environments - H.J. Lillemark, B. Huang, F. Zhan, Y. Du, T. Anderson Keller, 2025

Symplectic Generative Networks (SGNs): A Hamiltonian Framework for Invertible Deep Generative Modeling - A. Aich, A. B. Aich, 2025

On the Spatiotemporal Dynamics of Generalization in Neural Networks - Z. Wei, 2026

Demystifying the Math of Geometric Deep Learning-Graph Theory - Hands-on Geometric Deep Learning, 2025

Demystifying the Math of Geometric Deep Learning-Topology - Hands-on Geometric Deep Learning, 2025

Demystifying the Math of Geometric Deep Learning-Differential Geometry - Hands-on Geometric Deep Learning, 2025

🧩 Appendix

Here are a few notable additional world models.

Just-in-Time World Model

The Just-in-Time (JIT) model uses a local visual scan to quickly flag high expected-utility objects relevant for future steps of simulation. Details about the environment are added to a construal only when they are relevant to immediate next steps of the current simulation. A construal is the result of iteratively adding relevant objects to memory throughout a single simulation.

Equivariant World Models

These models rely on geometric symmetry priors such as group invariance or equivariance to enforce spatial transformations on the latent space. The latent state should not be affected by translation, rotation, reflection or any other affine transformation. This is accomplished by regularizing on the manifold, using the Laplace-Beltrami operator or Laplacian eigenvectors to ensure smooth transitions between states.

Symplectic World Models

These models attempt to represent physical laws through symplectic geometry, using Hamiltonian systems.
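The benefit of respecting symplectic structure shows up even on a toy Hamiltonian system. The sketch below compares a symplectic integrator (leapfrog) with explicit Euler on a harmonic oscillator. It illustrates the general principle only and is not taken from [ref 13]; the step size and step count are arbitrary choices.

```python
def hamiltonian(q, p):
    """Energy of a unit-mass, unit-stiffness harmonic oscillator."""
    return 0.5 * p ** 2 + 0.5 * q ** 2


def leapfrog(q, p, dt, steps):
    """Leapfrog (Stormer-Verlet) integration, a symplectic scheme."""
    for _ in range(steps):
        p = p - 0.5 * dt * q      # half kick: dp/dt = -dH/dq = -q
        q = q + dt * p            # drift:     dq/dt =  dH/dp =  p
        p = p - 0.5 * dt * q      # half kick
    return q, p


def explicit_euler(q, p, dt, steps):
    """Explicit Euler integration, which is not symplectic."""
    for _ in range(steps):
        q, p = q + dt * p, p - dt * q
    return q, p


q0, p0 = 1.0, 0.0
e0 = hamiltonian(q0, p0)
drift_leapfrog = abs(hamiltonian(*leapfrog(q0, p0, 0.1, 1000)) - e0)
drift_euler = abs(hamiltonian(*explicit_euler(q0, p0, 0.1, 1000)) - e0)
```

The leapfrog energy error stays small and bounded over the whole rollout, while the explicit Euler energy grows without bound. A world model whose latent transition is symplectic inherits this long-horizon stability.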

Spacetime World Manifolds

The purpose is to represent the physical world as a 4D differentiable pseudo-Riemannian manifold where 3D space and 1D time are merged, with gravity represented by the curvature produced by energy and matter. These models usually rely on the Lorentzian metric.
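For readers less familiar with the notation, the line element of a Lorentzian metric and the Einstein field equations that tie curvature to energy and matter are recalled below. The sign convention (-, +, +, +) is an assumption; the opposite convention is equally common.

```latex
ds^2 = g_{\mu\nu}\, dx^{\mu}\, dx^{\nu}
\qquad\text{(flat case: } ds^2 = -c^2\, dt^2 + dx^2 + dy^2 + dz^2 \text{)}

G_{\mu\nu} + \Lambda\, g_{\mu\nu} = \frac{8 \pi G}{c^4}\, T_{\mu\nu}
```

Here g is the metric tensor, G_{μν} the Einstein tensor built from the curvature of g, Λ the cosmological constant, and T_{μν} the stress-energy tensor.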

🛠️ Q & A 💎

Q1: How does the latent space geometry in Abstract World Models diverge from the Euclidean assumptions found in standard Convolutional or Transformer architectures?

Q2: What are the three key components of an agent in the architecture of the Ha & Schmidhuber (2018) world model?

Q3: To what extent do Abstract World Models prioritize the reconstruction of raw sensory data (e.g., images or video) compared to latent-only representations?

Q4: Within the JEPA framework, what is the specific nature of the output generated during a prediction, planning or simulation phase?

Q5: What are some of the geometric representations commonly employed to structure the latent spaces of Abstract World Models?

Q6: Can you list some of the fields of mathematics that might be involved with the design of Abstract World Models?

👉 Answers

💬 News & Reviews 💎

This section focuses on news and reviews of papers pertaining to geometric deep learning and its related disciplines.

Paper Review: The Riemannian Geometry of Deep Generative Models H. Shao, A. Kumar, P. Thomas Fletcher

The goal of this paper is to develop two fundamental algorithms for manifold-based learning:

Geodesic computation — estimating geodesic curves on a manifold.

Parallel transport — moving/translating tangent vectors along a path on the manifold.

This work is grounded in the manifold hypothesis, which states that high-dimensional data can be represented through a mapping (or decoding) from a low-dimensional latent space. The authors emphasize that the Jacobian of the neural network transformation in a hidden layer maps tangent vectors in latent space to tangent vectors on the data manifold, using the Riemannian metric to define this pushforward operation.

1. Discrete Geodesic Computation

Direct calculation of geodesics on a manifold is computationally expensive due to curvature terms and Christoffel symbols. The paper instead uses a discrete geodesic approximation, relying on finite-difference schemes and numerical estimates of the velocity field. Additionally, the Jacobian required for the calculation can be efficiently obtained via an encoder network.
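The quantities involved can be summarized as follows. This is a standard formulation written with assumed notation, not a transcription of the paper's equations: g is the decoder from latent space to data space, J_g its Jacobian, G the induced (pullback) metric, and z_0, ..., z_T a discretized latent curve with time step δt.

```latex
G(z) = J_g(z)^{\top} J_g(z)
\qquad
L(\gamma) = \int_0^1 \sqrt{\dot{\gamma}(t)^{\top}\, G\big(\gamma(t)\big)\, \dot{\gamma}(t)}\; dt

E(z_0, \dots, z_T) = \frac{1}{2} \sum_{i=0}^{T-1} \frac{1}{\delta t}\, \big\| g(z_{i+1}) - g(z_i) \big\|_2^2
```

Minimizing the discrete energy E over the interior points, with the endpoints held fixed, yields an approximate geodesic without evaluating Christoffel symbols.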

2. Parallel Transport

Once the geodesic path is discretized, tangent vectors are transported along this path in three stages:

a. Estimate the initial velocity of the geodesic.

b. Parallel transport the velocity along the computed geodesic.

c. Perform geodesic shooting to generate a new geodesic segment—implemented via a Singular Value Decomposition–based procedure.

Experiments

The two algorithms are evaluated on both synthetic manifolds and real-world datasets (CelebA, SVHN). Applications such as geodesic interpolation and Fréchet mean estimation demonstrate substantial performance gains (e.g., higher R^2 scores) compared with linear or Euclidean baselines.

Reader classification rating

⭐ Beginner: Getting started - no knowledge of the topic

⭐⭐ Novice: Foundational concepts - basic familiarity with the topic

⭐⭐⭐ Intermediate: Hands-on understanding - Prior exposure, ready to dive into core methods

⭐⭐⭐⭐ Advanced: Applied expertise - Research oriented, theoretical and deep application

⭐⭐⭐⭐⭐ Expert: Research, thought-leader level - formal proofs and cutting-edge methods.

Share the next topic you’d like me to tackle.

Patrick Nicolas is a software and data engineering veteran with 30 years of experience in architecture, machine learning, and a focus on geometric learning. He writes and consults on Geometric Deep Learning, drawing on prior roles in both hands-on development and technical leadership. He is the author of Scala for Machine Learning (Packt, ISBN 978-1-78712-238-3) and the newsletter Geometric Learning in Python on LinkedIn.

“All models are wrong, but some are useful.” — George Box